A few thoughts on the current state of LLMs

Introduction#

The software development industry is changing at a speed that most of us can barely keep up with. Regular day jobs and family life leave little time to rest, so trying to follow dozens of blogs and forums while simultaneously re-learning your profession is, understandably, stressful.

Here, I want to share a couple of personal realizations, along with one final thought on where I think the industry might be heading. I hope you enjoy it!

Models are getting pretty good#

I will start by stating the obvious: models like Claude Opus 4.6 and GPT-5.3-Codex are getting incredibly good. Give them a small, well-defined task, and they will one-shot it for you quite reliably. If they fail, they are usually only a prompt tweak or two away from your desired outcome.

You no longer need to memorize exact syntax, and you don’t need to spend hours manually parsing a new repository. They do the heavy lifting for you in record time, meaning your primary job shifts from writing every line to providing code review and your final seal of approval.

I think denying this reality as a software engineer is, at this point, a bold and dangerous stance.

Fear less non-determinism#

Okay, but what about non-determinism? How can we possibly rely on a tool that rarely outputs the exact same thing twice for a given input?

I was originally skeptical as well, but a specific realization changed my mind. It turns out that wrapping deterministic processes (e.g. automated testing) in human oversight is also non-deterministic. As a human, you might define the process incorrectly from the start, for example, writing your tests the wrong way so they deterministically fail to catch edge cases.

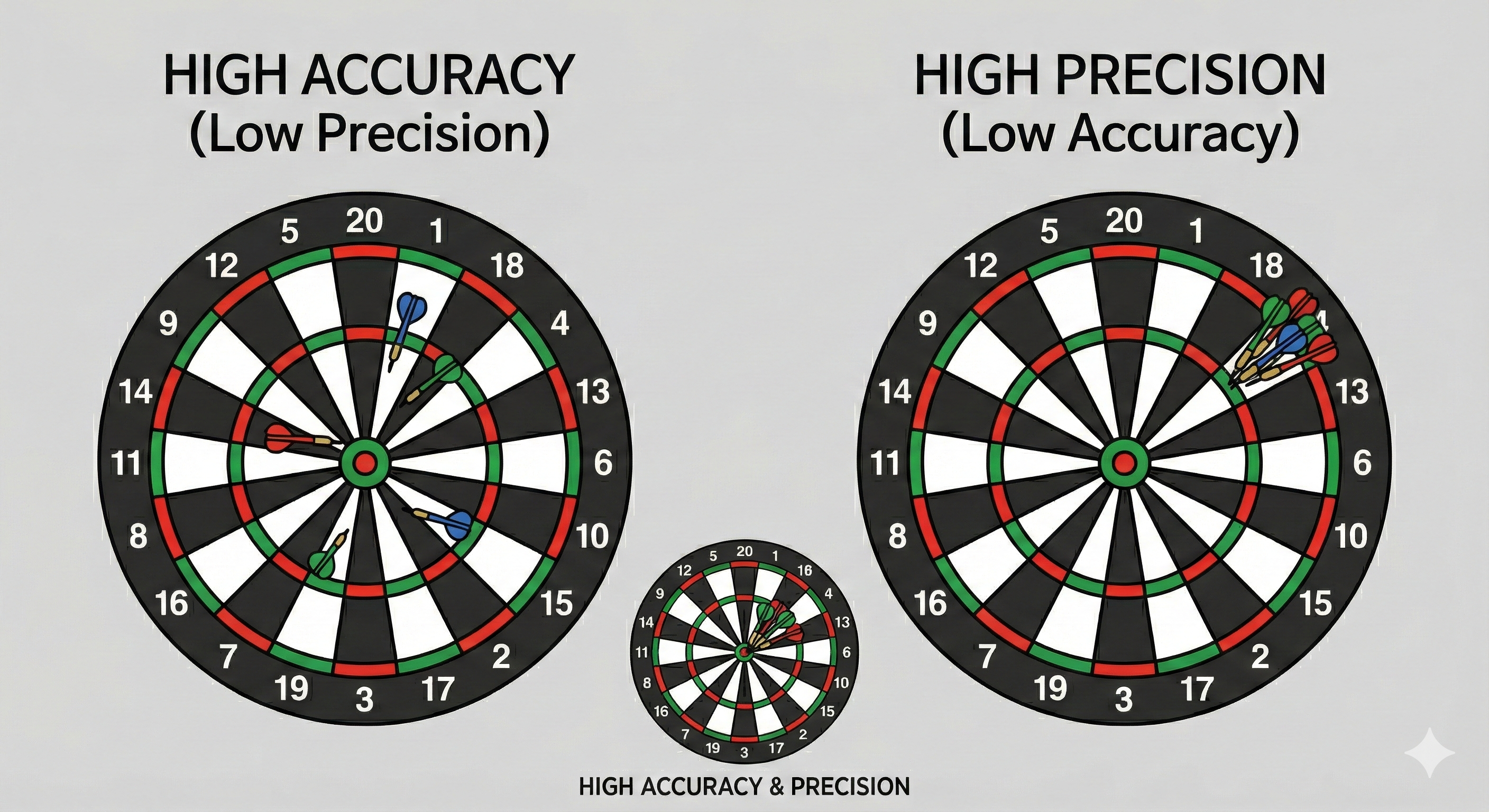

It comes down to the classic accuracy vs. precision analogy.

A good AI model, when properly prompted, is highly accurate but imprecise: it hits the target, but maybe not in the exact same spot every time. This is arguably just as good, if not better, than humans who offer high precision (the test runs exactly as written) but random accuracy (the test logic itself can be flawed). When you factor in the massive gains in productivity, the trade-off becomes more clear.

Boring is better?#

Once we climb the non-determinism wall, the next big question is how this mindblowing display of capabilities can actually be used to boost the entire software development cycle, and the industry overall.

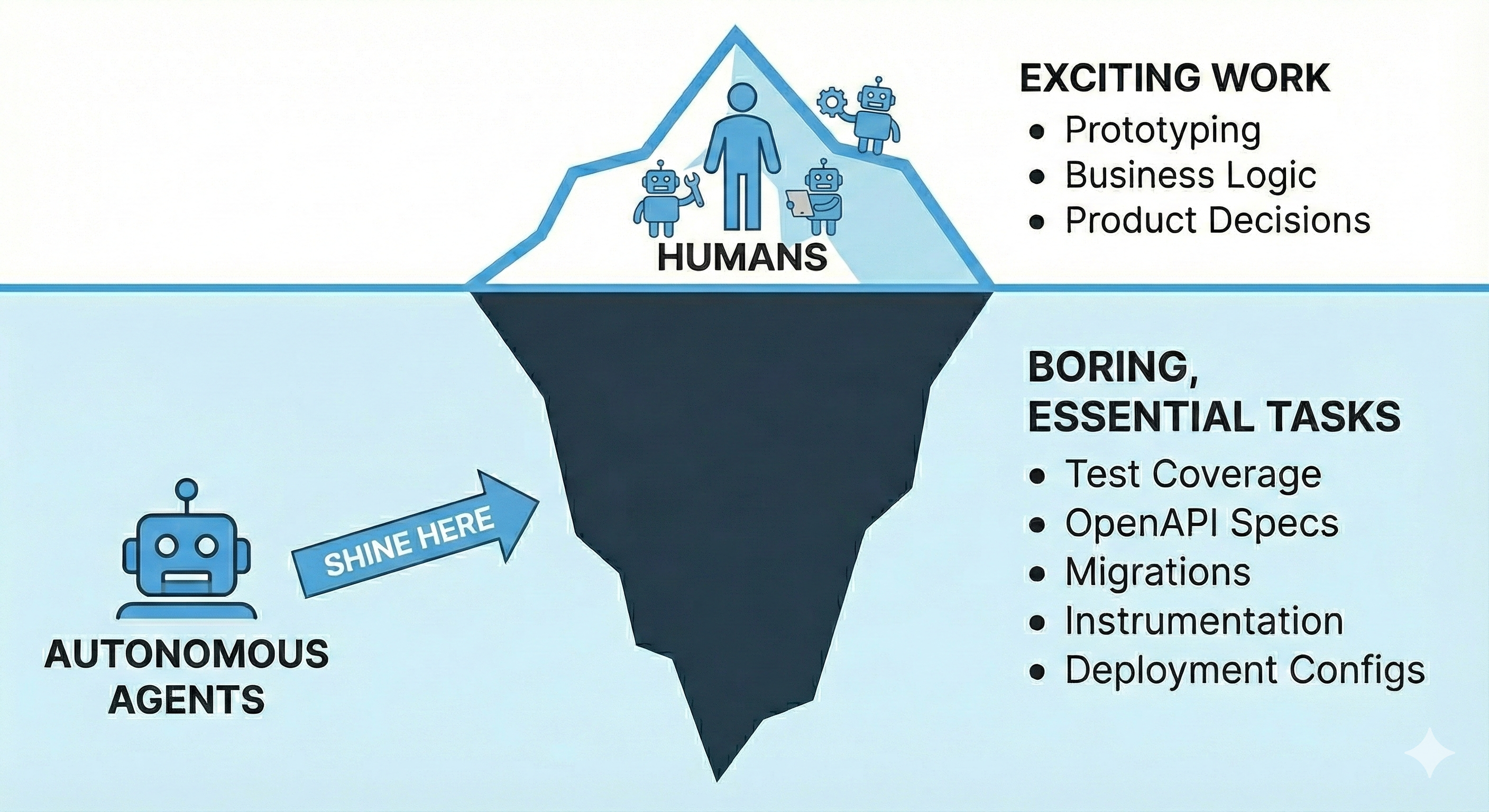

If we treat AI agents like software developers, the standard dynamic applies: senior engineers are more productive because they are highly autonomous, whereas junior engineers require constant supervision and course correction. I bet AI agents are going to fit this analogy perfectly. They will find their most useful niche where they can be truly autonomous, handling boring tasks that require little to no human-driven direction, as opposed to being the clever, multi-stage prototypers currently being hyped in all the magazines.

Agents that operate closer to ever-changing business needs (the prototypers) will have a much harder time creating infinite leverage. They will initially ramp up productivity, but eventually, they will hit the human bottleneck and plateau. There is a strict limit to the number of agents a human can effectively supervise and steer through complex business logic changes.

However, agents dealing with the “boring” parts in a highly autonomous way could dramatically improve the speed of the industry, taking care of everything else that doesn’t directly add business value, but is necessary to keep the party running.

What are those boring things that barely change over time? Here is a non-exhaustive list of examples:

Ensuring all endpoints are reflected in the OpenAPI spec file

Ensuring the code maintains 90% test coverage

Ensuring database migrations are backwards compatible

Ensuring the code is properly instrumented

Ensuring the code contains all required deployment configurations

Ensuring…

I believe that putting agents to work on these tasks, and having them review the work of others (both agents and humans) based on these same principles, is where the true, long-term leverage lies. Meanwhile, humans will remain in charge of the most complex problems and directional changes, supported by personal satellite agents like Claude Code or Codex.

Conclusion#

This technology is here to stay. It is not just going to accelerate software development to unprecedented levels, but it will also turn our current methods of evaluating software quality upside down.

Software engineers, now more than ever, need to start thinking like product managers and delegate the “boring” coding tasks to their new robot partners.

Thanks for reading!